"Experiment or die, there is no try."

That was my call to action, Yoda inspired, last week to a group of international C-level executives. And I meant every word of it.

There is a tendency to think experimentation and testing are optional. Ouch!

I fundamentally believe that is wrong. For a few simple reasons:

# 1 It's Not Expensive!

You can start for free with a superb tool: Google's Website Optimizer. It is packed with enough features that I have no qualms flogging it (even though I work closely with the team!).

If you want to help our economy and pay for your tools then that is absolutely fabulous. Both Offermatica and Optimost are pretty nice options.

[Just don't fall for their bashing of all other vendors or their silly, false claims of "superiority" in terms of running 19 billion combinations of tests or the bonus feature of helping you into your underwear each morning.

You'll be lucky if you can come up with 5 combinations, and it is not that hard to put on your underwear.

Look for actionable uniqueness. For example I am quite fond of the fact that with Offermatica you can "trigger" tests based on behavior. That is nice, well worth paying for.]

# 2 Six And A Half Minutes. That's it!

Tom has tried this with many, many Marketers, and it's so true: if you have two different pages you want to test, it takes six and a half minutes to configure, test (QA) and launch an A/B test.

[Please read that literally, as it is written. You have two pages already. 6.5 minutes to: Configure. QA. Launch.]

You have six and a half minutes, right?

I cannot recommend enough the wisdom of starting with an A/B test.

You will start fast, you will find enough problems in your company, you can show easy wins.

Aim to get to the thing vendors are selling, MVT, but start with A/B regardless of the tool you use.

# 3 Show 'em You Are Worth It.

There is a lot of pressure on all of us to prove our worth and make significant improvements to our web business.

ClickStream analysis with Omniture or Google Analytics or ClickTracks is well and good, but testing will get you on the path to having a direct impact faster.

By its nature testing is action oriented, and what better way to show the HiPPOs that you are awesome than by moving the dial on that conversion rate in two weeks?

# 4 Big Bets, Low Risks, Happy Customers.

Very few people appreciate this unique feature of testing: You have an ability to take "controlled risks".

Let's say you want to replace your home page with pictures of naked people, yes, in the quest for engagement. :) Naked people are risky, even if they are holding strategically placed Buy Now buttons.

So run a test where only 10% of the site traffic sees version B (naked people).

You have just launched something risky, yet you have controlled the risk by reducing exposure of the risky idea.

Stress this idea to your bosses, the fact that testing does not mean destroying the business by trying different ideas. You can control the risk you want to take.
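Your testing tool will normally handle the traffic split for you, but the idea is simple enough to sketch. The snippet below is a minimal illustration in Python, not how any particular vendor does it: the function name `assign_variant`, the experiment label, and the hashing scheme are all assumptions made for this example. It turns a visitor ID into a number between 0 and 1 and shows version B only when that number falls under the exposure you are comfortable with (10% here).

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, exposure: float = 0.10) -> str:
    """Return 'B' for roughly `exposure` of visitors and 'A' (control) for the rest.

    Hypothetical helper: the hashing scheme is an assumption for illustration.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Map the first 8 hex digits (32 bits) to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "B" if bucket < exposure else "A"


if __name__ == "__main__":
    visitors = [f"visitor-{i}" for i in range(100_000)]
    seen_b = sum(assign_variant(v, "homepage-redesign") == "B" for v in visitors)
    print(f"Share of visitors seeing version B: {seen_b / len(visitors):.1%}")
```

Because the assignment is derived from the visitor ID, a returning visitor keeps seeing the same version, and raising `exposure` later only adds visitors to version B.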

# 5 Tags, CMS, Reports & Regressions: All Included!

Pretty much all Testing tools are self contained, simple to launch (A/B is brain dead easy, MultiVariate needs your brain to be awake – that's not hard is it?), they contain all reporting built in and the data is not that hard to understand.

So you don't have to worry about integrations with analytics tools, you don't have to worry about rushing to get a PhD in Statistics to interpret results and what not.

You will hear super lame arguments about mathematical purity or my factorial is better and the other guy's whatever. Ignore. It will take you a while to hit those kinds of limits. And the nice thing is by then you'll be smart enough to make up your own mind.

What's important is you start. Do that today. Think of this as dating and not a marriage. You are allowed to make mistakes. You are not going to marry the first guy you run into. Don't take that approach here.

So agree with me? This is attractive? Right?

Think about it this way. If your analytics career is flagging then testing is the Viagra you need to take.

Seriously.

: )

So as my tiny gift for you here are five experimentation and testing ideas for you. I'll try to go beyond the normal stuff you hear at other sources.

# 1 Fix The Biggest Loser, Landing Page. (& Be Bold.)

Now all that is well and good. But a sad, common mistake people make is to get excited and then go test Add To Cart buttons. Or three different hero images on the home page.

That's all well and good. But honestly that's not going to rock your boat. [Remember you are on Viagra!]

For your first test be bold, try something radical, bet big. I know that sounds crazy. But remember you can control risk.

If you start with an A/B test with some substantial difference then you can show the value of testing faster because you'll get a signal faster, and you'll start the emotional change required to embrace testing across the organization.

My favorite place to start is the Top Landing Pages report (or Top Entry Pages if that's what your vendor calls it) from your web analytics tool.

Find the biggest loser, the one with the highest bounce rate.

Click and look at the sites sending traffic to this page, look at the keywords driving traffic to this page. That will give you clues about customer intent (where people come from, and why).
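If you export that report, finding the biggest loser can be sketched in a few lines. This is only an illustration: the `LandingPage` fields, the sample rows, and the `min_entrances` cutoff (so a page with a handful of visits does not "win") are assumptions, not something your analytics tool provides in this form.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class LandingPage:
    url: str
    entrances: int  # visits that started on this page
    bounces: int    # entrances that left without viewing another page

    @property
    def bounce_rate(self) -> float:
        return self.bounces / self.entrances if self.entrances else 0.0


def biggest_loser(pages: Iterable[LandingPage], min_entrances: int = 1000) -> Optional[LandingPage]:
    """Return the page with the highest bounce rate among pages with enough traffic."""
    candidates = [p for p in pages if p.entrances >= min_entrances]
    return max(candidates, key=lambda p: p.bounce_rate, default=None)


# Hypothetical rows, as you might export them from a Top Landing Pages report.
report = [
    LandingPage("/", 52_000, 18_200),
    LandingPage("/products/widgets", 9_400, 7_050),
    LandingPage("/blog/old-post", 40, 39),  # very high bounce rate, but too little traffic
]

if __name__ == "__main__":
    loser = biggest_loser(report)
    if loser is not None:
        print(f"Start here: {loser.url} (bounce rate {loser.bounce_rate:.0%})")
```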

Come up with two different (bold) ways to represent that page and deliver on that customer intent.

Your first A / B / C test.

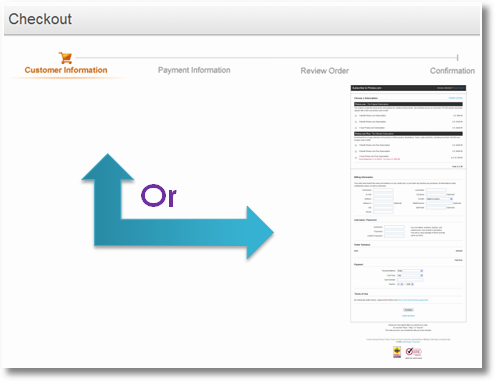

# 2 Test a Single Page vs. Multi Page Checkout.

One of the highest-impact ways to improve conversion is to reduce Cart & Checkout Abandonment rates. Take money from people who want to give you money!

Some websites have a one page checkout process: Shipping, billing, review and submit.

Some have it on four pages.

I have seen both work, you never know, it really depends on the types of visitors you attract.

So if you have a single page why not try the multi (if your abandonment rate is high, say more than 20% :)). Or vice versa?

I have seen very solid improvements in these tests.

Or here's a bonus. Many shopping cart (or basket to my British friends) pages have an Apply Coupon Code box. This seems to cause people to open Google and search for codes. So why not move this coupon code box to the Review Before Submit page?

It won't send those who don't have a coupon code looking for one, and by the Review Order page they are way too committed. For those that have a coupon code they can still apply it.

In both these scenarios you are helping your organization find value quickly by touching a high impact area.

And remember, this works for lead submission forms and other such delights.

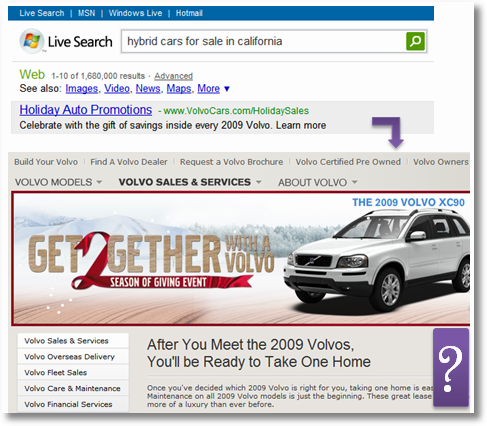

# 3 Optimize the Number of Ads & Layout of Ads.

Ad supported sites are numerous. And there is so little restraint; the core idea seems to be let's slap as many ads on the site as we can.

More ads = more clicks = more revenue.

This is almost never tested.

[I can't read Norwegian so this could be wrong, but I counted a total of 19 ads on this page! Ten above the fold. Important point: American sites are just the same.]

So test the number of ads you should have on a page. It's not that hard. It can be a simple A/B test or a MultiVariate test.

In a memorable test the client actually reduced the number of ads on the page by 25% and the outcomes improved by 40%. I kid you not, 40%. And guess in which version customers were happier.

There is a built in assumption there that you are not simply selling impressions. If you are, pile on the ads in the pages. You are not being held accountable for outcomes so enjoy the ad party.

Here's a bonus idea.

There are sites where the ad is in the header, it takes up the whole header and is the first thing that loads. I have only seen one case where that worked.

The header takes up 30% of the space above the fold on a 1024 resolution.

So if that is you why not try a test with the header ad and without? See which one improves overall conversion / outcomes?

The other bonus idea is to try different ad layouts. Most people have banner blindness for ads at the top of the page and in the middle of the content (as in Yahoo News).

Why not try different layouts and formats? If not to see which one works the best then to just annoy your customers? :)

# 4 Test Different Prices / Selling Tactics.

You can of course test different pretty images, but why not try to reinvent your business model using testing?

A company was selling just four products. But the environment got tough, the competitors got competitive. How to fight back? Some "genius" in the company had an idea "Why don't we give our cheapest product, currently $15, away for free?"

CMO says: Radical idea. CEO says: Are you insane? CFO says: No way!

Now it did present a fundamental challenge, no one likes to give revenue up. And people worried about how successful it would be, what would be the revenue impact, why would anyone buy a non-free version etc etc.

Rather than create prediction models (with faulty assumptions!) or giving up in the face of the HiPPO pressure, the Analytics team just launched an A/B test. And they controlled for risk (after all the CFO did not want to go bankrupt) by sending 95% of traffic to the control and only 5% to the test version.

Perhaps unsurprisingly the free version of the product sold lots of copies.

That was not surprising.

What was surprising was that free helped shift the SKU mix in a statistically significant way, i.e. the presence of free caused more people to buy the more expensive options. Interesting. [In a delightfully revenue impacting way!]

The other positive side effect was to cause lots of new customers to be introduced to the franchise, as they "purchased" the free version. Lovely.
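How might you check a claim like "statistically significant" on a lopsided 95% / 5% split? One common approach (not necessarily what that team used) is a two-proportion z-test. Here is a minimal sketch in Python using only the standard library. The function name and every number in the example are invented for illustration; they are not the results of the test described above.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare two conversion rates; return (z score, two-sided p-value)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both versions convert the same.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Made-up numbers: 95,000 visitors in control, 5,000 saw the free offer.
    # "Conversions" here are purchases of the more expensive products.
    z, p = two_proportion_z_test(conv_a=1_900, n_a=95_000, conv_b=130, n_b=5_000)
    print(f"z = {z:.2f}, p-value = {p:.4f}")
```

With a small test group the standard error is dominated by the 5% side, which is why a bold change (a big difference) gives you a readable signal sooner than a timid one.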

Here are some bonus ideas.

If you give discounts try 15% off vs $10 off (people tend to go for the latter! :)).

Try $25 mail in rebate vs $7 instant rebate (or change amounts to suit).

You get the idea.
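Before you run a discount test it helps to know what each offer costs you per order. A quick sketch of the arithmetic in Python (the function names are made up for illustration, and it assumes a flat discount can never exceed the order value): below roughly $66.67 the $10 offer is the bigger discount, above it the 15% offer is.

```python
def discount_costs(order_value: float, percent_off: float = 0.15, flat_off: float = 10.0):
    """Return (cost of the percent offer, cost of the flat offer) for one order."""
    return order_value * percent_off, min(flat_off, order_value)


def break_even_order_value(percent_off: float = 0.15, flat_off: float = 10.0) -> float:
    """Order value at which both offers cost the business the same amount."""
    return flat_off / percent_off


if __name__ == "__main__":
    print(f"Break-even order value: ${break_even_order_value():.2f}")
    for order in (40.0, 66.67, 120.0):
        percent_cost, flat_cost = discount_costs(order)
        print(f"${order:>7.2f} order: 15% off costs ${percent_cost:.2f}, $10 off costs ${flat_cost:.2f}")
```

So if your average order is well under the break-even value, the offer people tend to prefer is also the one that costs you more. That is exactly the kind of trade-off a test settles.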

# 5 Test Box Layouts, DVD Covers, Offline Stuff.

Let's say you are launching a new product or a dvd or something similar. You want to figure out what layout might be more appealing to people in stores.

You could ask your mom to pick a version she likes.

You could ask your agency to ask a few people.

Or you could launch a test online and see which version is rated highest by your website visitors!

I have done tests for DVD covers and the results were surprising.

Or here's another idea…

You are a multi channel retailer. You sell bikinis. Now you want to sell Accounting Software. Why not try it on your website before you reconfigure your stores?

Or you are Wal-Mart and it is expensive and takes a long time for you to put new products in your stores. That makes it risky to start stocking the "on paper hideous but perhaps weirdly appealing" Zebra Print Occasional Chairs in your store. What if it bombs?

Well why not add it to your site, see if it sells. If it gets 15 positive customer reviews (!!), then you know you have a winner on your hands.

The actual launch process is faster, you can reduce risk, and you don't have to rely on just your company employees (the fashion mavens) to pick winners and losers.

All done.

I hope that you have found compelling reasons for starting experimentation, and that I have managed to stretch your mind beyond "honey let's start testing shopping cart buttons".

There is so much you can do. This recession season buy your CEO the gift that keeps giving, an experimentation and testing tool.

Here's a summary for you. . . .

Five reasons for online Experimentation & Testing:

#1 It’s Not Expensive!

#2 Six And A Half Minutes. That’s it!

#3 Show ‘em You Are Worth It.

#4 Big Bets, Low Risks, Happy Customers.

#5 Tags, CMS, Reports & Regressions: All Included!

Five off the beaten track Experimentation & Testing ideas:

#1 Fix The Biggest Loser, Landing Page. (& Be Bold.)

#2 Test a Single Page vs. Multi Page Checkout.

#3 Optimize the Number of Ads & Layout of Ads.

#4 Test Different Prices / Selling Tactics.

#5 Test Box Layouts, DVD Covers, Offline Stuff.

OK, now it's your turn.

What are the reasons your company is not jumping on the awesome testing bandwagon? If it did, what finally convinced them? If you are doing testing, care to share some of your ideas? Anything off the beaten path you have tried? Any massive failures?

Please share your feedback, insights and stories.

Thank you.

PS:

Couple other related posts you might find interesting:

From an agency point of view – I've found that testing is a much tougher "sell" than analytics. I don't really comprehend why, but it seems that people are either very shy about testing, or they are flat-out against it. Most everyone who reads this blog knows about the greatness of experimentation (and GWO), but something seems to get lost in the translation process to site owners / marketing directors.

Perhaps a bold statement like "Experiment or Die" is what I need to start saying to get people on board? People seem to get it (now) as far as Web Analytics goes – that's a no brainer…a program like Google Website Optimizer is just as expensive ($0!), why is there such resistance out there towards the concept? Are site owners so emotionally attached to particular colors or buttons or headers on their sites that they could not fathom the idea of experimenting something?

P.S. I really like the "controlled experiment" / "low risks" part – that even reassures me, and I don't even own a website :).

… make sure to segment your results!

Look at temporal, environmental, source & behavior segments & sub-segments. You'll find the web is a highly dynamic place and not "one size fits all."

Keep the faith Avinash!

My corporation hasn't been testing because we don't know exactly what to test for. We are mostly a support site for informational purposes. The coupons we offer are all based off our direct mail campaigns, so it is harder to sway management to change them or test. Hopefully after we implement 4Q, we can get some great data that might "rock the boat" a little more. Thanks for the post Avinash – you always get the gears turning in my mind.

Here is a WordPress plugin that works with the website optimizer.

http://websiteoptimizer.contentrobot.com/

I haven't tested it yet, but your article is giving me a boost. My reason for not testing it is that I run Adsense based websites and not actual shops or websites that require an action

+++++++++++++++++++++++++++++++++++++++++++++++

Razvan:

I am also quite fond of this plugin for integration with wordpress:

http://www.impressionengineers.com/wordpress/easy-google-optimizer-plugin/

I have tested it and it works well.

Thanks.

Avinash.

Avinash, thank you for this important post.

What I often find is a reality in which organizations contract design services – especially interaction design. As a result, page layouts and the look & feel of landing pages are set during the concept development phase. The consulting firm leaves around deployment time, and there is no one to take charge of testing variations.

Progressive companies will pay for some iterations of user validations, but there is always a real budget pressure to release asap and cut costs. I have yet to see a project plan that seriously accounts for sufficient exploration and testing, and have to fight for it time and again. It is not that clients don't see the value, but they don't want to pay unless it is seriously broken.

It takes significant time and labor (=$$$) to determine and preserve patterns of consistent interaction and visual design approach and the variations possible. The efforts can be significantly bigger when you are dealing with a multi-national presence where one needs to account for many stakeholders as well as contrasting cultural sensibilities.

I am sure that for those companies with in-house interaction teams, or at least a dedicated interface designer, the possibilities you cover are extremely beneficial.

I see a gap in the interaction design discourse when it comes to web analytics (and testing for optimization). Analytics is regarded as a 'post' event, not as something you can be proactive about during the design process. What I hope to see is more dialog between the user experience community and the web analytics community around practical ways to integrate the type of testing you call for.

Now all that is well and good. But the sad thing in a common mistake people make is get excited and then go try to test Add To Cart buttons. Or three different hero images on the home page.

Great Post, as usual :-)

This post will be quite useful for me actually as it gives some key points and key sentences to convince people on testing things.

The company I'm working is a PPC agency, and they are now turning into a publishing company. Although, they are very good in PPC, I believe they are just 5 years behind for publishing, and it's being hard to open their eyes to testing and web analytics in general (they have a few websites already, but never set up a goals on Google Analytics). I see that as a great opportunity though.

Thanks for your post Avinash. It will probably be useful.

Great post, Avinash, it's encouraging to see the continued emphasis on testing as a must-have vs. nice-to-have!

A few thoughts – I think the reason there is a much higher resistance to testing relative to analytics is because testing is not something you can turn on and then pat yourself on the back for a job well done. It requires a constant resource to design, run, and analyze a roadmap of tests. Also, any marketer or company that makes the decision to test essentially has to commit to the reality of "failing" at least once! If I'm playing devil's advocate, I would imagine that it can seem easier to just pay an agency to create a single landing page and consider that task completed. Of course, the flip side is that companies will enjoy many more successes if they continue to test iteratively.

I think there needs to be a redefinition of what success really means in a test. It cannot just be lift in your KPIs. I've seen many tests that fail, but make the customer go, "Oooh…now I understand." I consider those learnings a success as well.

I agree that testing bold alternatives is certainly better than wasting your time on tiny changes your visitors won't notice. However, I get scared thinking about a testing newbie trying to tackle the big and technically complex test first! I would advocate balancing 3 different factors in designing that first test: 1) make dramatic changes, 2) consider the lift potential, 3) weigh the technical and political challenges. Each of those can make a big impact on how the rest of the company perceives and accepts testing.

Again, great post!

Avinash,

The reason why I'm not doing A/B testing is that it's too complex to start.

It's definitely not 6.5 minutes as you promised.

And most likely it's not even 6.5 hours.

The A/B Web Site Optimizer demo that I watched a few months ago didn't address the most complex part of A/B testing: writing javascript for the pages that would be tested.

Basically it kicks the responsibility of writing such script to the web developer.

Well, I am a web developer. But it wasn't obvious how to start writing that script.

I think that if I spend a day or two researching the issue — then I would find out how to approach writing that script, but it's definitely not easy.

I hope this helps you identify and fix the issue that keeps users from even trying A/B testing.

Your title couldn't have been more accurate in this uncertain economic period. Fewer people will shop for less money. It will be more important than ever to "Take money from people who want to give you money!"

Keep up the good work Avinash.

BTW, I can see VG, our biggest Norwegian newspaper, has made an impression on you from your visit here in Norway. :-)

Joe: I think I should write a post titled "how to sell experimentation and testing".

I humbly believe that we have been selling it wrong. We sell the technology part of it or we sell the measurement part of it or we sell elements that boggle people's minds ("multivariate regressions of ten to the power of ninety two combinations" – What!) or things to that effect.

What we should sell, or I sell, is. . . .

+ the power to prove ideas (and create a true ideas democracy)

+ the power to liberate Designers and Creative folks to try their wildest dreams (things that would have been crushed by hippos)

+ the power to stop guessing what the customers want and just involve them

+ the power to figure out how wrong you are, fast (!)

+ the power to make money (if all else fails use this, how is it possible that you refuse the option to make more money!)

Something like that. Very different from vendor pitches I see, at clients or at conferences (I saw a testing vendor do a pitch a couple weeks back and I wanted to cry).

Scott: At my last job some of the most effective use of testing was on the tech support site (for a software vendor). Every improvement reduced tech support phone calls! :)

I remember we started with how search results appeared when you searched for something. Then we tried to make the FAQ itself more readable (using ajax). Then we focussed on a few different designs of the main top navigation on the site. Finally it was time to "slash and burn" the home page and try different ways to get people to the biggest problems in one click.

Just some ideas.

And you are right on track with using 4Q, your customers will then tell you what to fix. The sweetest gift of all.

Jonathan: How could I have forgotten that! It was my "big post" last month. :) You are absolutely right.

Ezra: You point to a very valid issue, and it is a problem. I work with Agencies all the time and they run into this "billable hours" problem as well.

My suggestions to them are two fold. 1) Try not to sell the entire planet on the first go. Suggest a simple test, something sufficiently robust, maybe just on one page. 2) See if you can do the first one for free, just to prove the value.

That latter suggestion 8 times out of 10 results in a positive outcome where the client "gets it" and in the future pays for this type of stuff (and so a longer term revenue stream for the Agency / Interaction Designer).

I do realize not everyone (Agencies / Designers) can afford to do this and the above does not take care of the last problem you outline (gap between interaction design and web analytics discourse – but we have to start some where).

Philippe: You should tell them that in my humble opinion not having Goals set up in GA (or any other tool) is a "crime against humanity"! It is that important. :)

Lily: Excellent points!

Right after I show my HiPPO slide in my presentations I have a slide with these words, in 70 font size: "Learn to be wrong. Quickly."

Also see my personal experience on how to frame testing, in reply to Joe. I really think you can't start at the bottom, then everything is about saving money. You have to convince the Top (in their language) then not doing testing seems like the dumber option. :)

Yes we can!

Dennis: I think there might be a misunderstanding at your end. Possibly?

The javascript tag will be provided by the Website Optimizer tool. Please see this:

http://www.google.com/support/websiteoptimizer/bin/answer.py?answer=71362

You have two versions of the page (yes you have to do this work and I did not include this in 6.5 minutes) and if you have then login, setup, qa and launch will take six minutes. I have done it many times (and I have one hundredth the technical skills you have!).

Hope this helps.

-Avinash.

Hi Avinash,

It's very nice to do the experiment. I think of it like creating the rules or formula for success by mixing chemicals.

We have implemented idea number 4 and 1. Also I liked the second. We will test it. :)

It is a very nice post as usual.

Thanks,

Bhagawat.

Avinash,

The reason why we have not used GWO multivariate or test is that our site is 100% dynamic and virtually 100% long-tail in its nature.

From what I have seen and from talking directly with Google, there is no way to easily setup testing that scales across many dynamic pages since the changes are applied post JSP/PHP/.NET response. Everything is controlled at an individual page-level.

We have over 60000 products and 3000 manufacturers in our site and the behavior of the products and companies is very chaotic over time. Additionally, the n on a page-level is too low to be relevant for us in any meaningful amount of time.

Please advise how you think that we could apply GWO to dynamic pages with scale.

Hi Avinash,

In our experience, testing has been hard to sell. Clients are usually very open and excited about the idea of testing and optimizing, however, when it comes down to paying for consulting services and the priority of the project internally, the excitement gets cooled down.

I think the market is getting more and more educated and we'll see testing become a part of the marketing mix.

Humberto

@MichaelFreeman

It works fine with dynamic pages. You can dynamically add the WSO tags to the product page you care about or add the WSO tags to your global template and test global changes like buy now button or CSS styles.

Avinash,

To expand on some of the previous posts – learning to be wrong is really hard and uncomfortable for many a Hippo.

I have run into two other roadblocks repeatedly. #1 – trying to get too many answers with one test. Accept the limitations of an A/B test (or multivariate) and work with it. Plan a second round. Keep it simple, or you will end up with a lot of data that tells you nothing.

#2 – Accept differences. The urge to keep everything in a template format stifles so many opportunities. It is possible to stay within a Style Guide and still get stronger performances from each page. Good designers can help you get there. Developers have to be willing to play along.

I agree with Lily – testing isn't something that is done. It's something you have to keep doing. Instead of feeling overwhelmed by that, embrace it and use it to challenge designers and copywriters.

And one more thing. No test will ever work if you don't really know what your objective is in the first place. ECommerce sites are one thing – those of us without shopping carts often have to deal with the moving target of success.

Avinash – I think your comment on how to sell testing is very telling on just how nascent the industry is today. For example, if I had a really great coffee maker to sell, I would be talking about its strengths, like how it enhances the roast or is very easy to clean, features that differentiate it from competitors. I wouldn't have to sell people on the merits of coffee itself. With testing, it seems that many people are not convinced that it's even worth it yet! It's definitely a challenge for those of us on the vendor side because we have to balance evangelizing testing with also emphasizing how we are, in fact, different from other products out there.

From an SEO point of view, I know testing is very important, but it truly is hard to sell to the client. For a website that is already getting good traffic the natural next step is to make the site convert better. Of course this is where landing page optimization comes in. Getting the client on board (even though they know they should at some point) is tough. Tough because it requires them to spend designer time, programmer time etc.

Great article, Avinash.

One way that I reduce risk, in my client's eyes, is to let them know that testing is temporary. If a new design performs worse than the old design, then it's only for the duration of the test and the previous page can be easily restored once it is over.

Not only do you "Learn to be wrong. Quickly." but you also are wrong only temporarily. However, the benefits from being "right" last beyond the period of the test. There's a possibility of a small downside or a big upside.

I do have to take issue with how this article glosses over how important statistical significance is and assumes that marketers will become knowledgeable about testing, just by doing it.

"You will hear super lame arguments about mathematical purity or my factorial is better and the other guy’s whatever. Ignore. It will take you a while to hit those kinds of limits. And the nice thing is by then you’ll be smart enough to make up your own mind."

I completely agree that the underlying math and type of factorial test design are advanced topics and should not be barriers to getting started testing. However, they are not subjects that are clear just from having experience.

I've had numerous clients come to our company because they've tried other products or tried to do it themselves and have failed to see results. The fact that they are still trying to get it right is a testament to their commitment to testing, but at the same time there are a lot of companies who get burned by testing again and again and can't justify more testing.

Trying it out is the first step, but learning how to use it and having proper expectations is essential to ingrain testing as a part of the company culture and make sure it sticks around.

Great post Avinash. I appreciate the focus on experimentation and keeping sight of the goals. Too many people get caught up in executing a perfect test, when the conditions for a perfect test are seldom seen by our users.

Representing the reality of choices the consumer has to make, and figuring out why they do or do not make the choices we want is key to good testing. Thanks!

We find that the biggest challenge website owners face is coming up with better alternatives to test. If they've spent time on their conversion pages, the pages may already represent their best thinking.

The tools are simply tools. It's how you use them that delivers results.

Chris

Michael: Takes a bit more thought but I have used GWO successfully on very dynamic high traffic websites. Here is a page that might be helpful:

GWO: Experimenting With Dynamic Content

If you think you could use some help here is a great option, fixed price consulting from a WOAC:

Get Google Website Optimizer Personalized Assistance

But most important of all is the fact if you find GWO does not work then you should just move to a different vendor. In addition to GWO I have done testing on dynamic sites using Offermatica (from Omniture) and I know Optimost also does it.

Just place a call to those vendors and pick the one that works for you.

For a dynamic site like yours I think there is a lot of benefit to be gained from testing so don't let one vendor stop you!

Kristen: Very good advice Kristen, spoken like someone who has been in the weeds fighting hippos! :)

Lily: I think you've hit the nail on the head. I think with web analytics vendors we are in the "enhances the roast, easy to clean etc" phase. With testing we have at least another couple years to go to get there.

Right now I feel that as a testing vendor ecosystem we need to band together and in a joint voice evangelize the customer value (and prove it).

Then we'll get to the delightful stage where we (vendors) can finally start to fight and argue with each other about features etc. Nirvana!

Jaan: I don't know if it is important from a SEO point of view (the googlebot does not have any feelings! :)). But certainly from a customer experience and conversion improvement points of view I humbly believe testing is mandatory.

I concur with you that it is hard to sell, we all have to just try.

-Avinash.

Great post — and excellent comments and observations.

For those people who are having trouble selling Testing within their organizations, this might help:

Here is how Obama used Conversion Rate Optimization to maximize online donations. In an amazing bit of sleuthing, Chris Goward blogs on how the Obama campaign successfully used a Conversion Rate Optimization strategy to maximize online donations.

Read all about it here: http://www.widerfunnel.com/case-study/obama-used-conversion-rate-optimization-to-win#more-267 and here is an update: http://www.widerfunnel.com/case-study/obamas-home-page-image-test#more-284

Avinash,

Following up on Jaan's point, you see companies spending TONS of money to drive traffic to their sites through paid search efforts, banner ad campaigns, organic search optimization, etc…etc…. But when the users get to the website, if the page hasn't been optimized, hasn't been experimented on to get rid of flaws, hasn't been tweaked, you lose the conversion. All of that money and effort put into driving more traffic to your site is wasted if you can't get the conversion. I personally believe the first step should be to test and optimize your key pages on your site that drive a person toward conversion BEFORE investing in the SEO and SEM and big campaign efforts. What if these campaigns drive an additional 800,000 people to your site in a month? What if optimizing the pages gives you a 10% better conversion rate than if you hadn't optimized? Look at the lost opportunity. So, I say test and optimize before the big spends on driving traffic to your site. In practice, I've seen the big spends placed on traffic drivers even when it's known that there is a huge bounce rate problem on the site….something they plan to fix somewhere down the line.
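To put rough numbers on that what-if, here is a minimal sketch. The 800,000 visitors and the 10% lift come from the comment; the 2% baseline conversion rate is purely an assumption for illustration, so swap in your own figure.

```python
def extra_conversions(visitors, baseline_rate, relative_lift):
    """Conversions gained when the same traffic lands on an optimized page."""
    before = visitors * baseline_rate
    after = visitors * baseline_rate * (1 + relative_lift)
    return after - before

# 800,000 visitors and a 10% lift are from the comment;
# the 2% baseline conversion rate is an assumed, illustrative figure.
gained = extra_conversions(visitors=800_000, baseline_rate=0.02, relative_lift=0.10)
print(f"Conversions left on the table: {round(gained):,}")
```

Whatever baseline you plug in, the lost conversions scale directly with the traffic you paid for, which is the point being made about sequencing.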

I believe there is a slide floating around somewhere…maybe on your site….from Omniture that drives this point home.

My 2 cents for the day.

@Raquel

Thank you for those 2 links. I'm one of the people who have trouble convincing the office to test things. Hopefully, those posts will have an impact :-)

@Alice Cooper's Stalker

I think I have a slightly different opinion when you say that we should experiment first, and then invest in traffic. We should do both all the time!

To test and experiment with landing pages, you need traffic, and I believe having traffic from different channels is even more insightful. From an SEO point of view, investing in that channel from the beginning is a KEY factor for success.

@Joe, Scott, Ezra, Humberto:

I believe some of the reasons why testing is a much tougher “sell” than most everything else includes:

1. Many marketers (i.e., those making the decision to test-or-not-to-test) don’t want to be measured, pure and simple. And they don’t want to be measured because their compensation and rewards system is not tied to their company’s ROI metrics. So they prefer to go the “gut feel” route.

2. Site owners / marketing directors (where one would assume the compensation/reward system is properly aligned) who don’t embrace this likely hold back because the skill sets required to pull testing off as a strategy (and not as a one-off party trick you can talk about later) are very varied, ranging from strategy to design, copy, coding, etc. and don’t usually (ever?) reside within one company – and with surplus capacity.

Hence companies like ours (www.WiderFunnel.com) are in demand as we provide turnkey experiment services. We address the “billable hours” problem Avinash talks about through fixed fees, so no one gets nickel-and-dimed.

3. Not knowing what to test is a HUGE reason why companies don’t test, or stop after the first test (usually a test of a red button — yes, that one is a no-brainer). Also, not knowing what to test coupled with a powerful tool like GWO, often translates into poorly designed experiments that take months and months to complete. Now, that is off-putting.

4. Another reason for lack of adoption has to do with the expected lift in conversions vs. cost of experimentation. This speaks to Humberto’s “when it comes down to paying for consulting services and the priority of the project internally”.

Let's be realistic: in some cases, there isn’t enough traffic and/or margin to support investing in experiments beyond some basic do-it-yourself stuff (i.e., the six and a half minute experiment Avinash mentions). You can download a calculator here: http://www.widerfunnel.com/proof/do-the-math and determine how much your company can afford to invest on Conversion Rate Optimization based on the expected Lift.
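For a feel of the kind of math involved, here is a minimal sketch of a break-even estimate. It is not the calculator linked above; the function name, the inputs and the 12-month horizon are all assumptions chosen for illustration.

```python
def break_even_budget(monthly_visitors, baseline_rate, expected_lift,
                      margin_per_conversion, months=12):
    """Incremental margin the expected lift would generate over the period.

    Spending less than this on experimentation leaves the project ahead.
    """
    extra_conversions = monthly_visitors * baseline_rate * expected_lift
    return extra_conversions * margin_per_conversion * months

# Every input here is a made-up illustration, not a benchmark.
small_site = break_even_budget(5_000, 0.02, 0.10, margin_per_conversion=30)
large_site = break_even_budget(500_000, 0.02, 0.10, margin_per_conversion=30)
print(f"small site: ${small_site:,.0f}   large site: ${large_site:,.0f}")
```

With identical conversion rates and lift, the low-traffic site can only justify a small fraction of what the high-traffic site can, which is why do-it-yourself tests may be the sensible ceiling for some companies.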

@Scott:

“My corporation hasn’t been testing because we don’t know exactly what to test for”… We are presenting a free webinar next week entitled “How to Evaluate Your Landing Pages and Reach Maximum Conversions” where we will teach how to develop hypotheses for conversion rate lift. Email me at Raquel.Hirsch@WiderFunnel.com and I will send you the registration info.

@Avinash:

IMHO, the only way to “sell” testing is to demonstrate the upside through conservative models showing the impact on revenues vs. the associated costs.

After all, this *is* what testing is all about: making more money from improved conversions.

Analytics is a ‘post’ activity with “recommendations for further action” (i.e., an expense with a potential upside if the recos are implemented properly), whereas Testing and Optimization most often more than pay for themselves right on the initial effort through improved conversion and the attendant revenue and margin associated with it.

Love your blog, Avinash!

@ Raquel

Definitely agree with you on conversion lift vs cost. Managing the client's expectations is key because there is a chance the test will be inconclusive, or that the new version will actually perform worse than the original (not that we want it to, but it is a possibility).
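One way to show a client what "inconclusive" looks like is a quick significance check. Below is a minimal sketch using a standard two-proportion z-test; the visit and conversion counts are invented, and real testing tools may use different statistics.

```python
from math import sqrt, erfc

def two_proportion_p_value(conv_a, visits_a, conv_b, visits_b):
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a, rate_b = conv_a / visits_a, conv_b / visits_b
    pooled = (conv_a + conv_b) / (visits_a + visits_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visits_a + 1 / visits_b))
    z = (rate_b - rate_a) / std_err
    # For a standard normal, the two-sided tail area equals erfc(|z| / sqrt(2)).
    return erfc(abs(z) / sqrt(2))

# Illustrative counts only: the same observed rates at two sample sizes.
small = two_proportion_p_value(20, 1_000, 25, 1_000)
large = two_proportion_p_value(2_000, 100_000, 2_500, 100_000)
print(f"small sample p = {small:.3f}, large sample p = {large:.3g}")
```

The same 2.0% vs 2.5% difference is indistinguishable from noise on a thousand visits per version and unmistakable on a hundred thousand, so the traffic available largely decides whether a test can conclude at all.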

Thanks for your feedback.

Hmm…"testing vendor ecosystem" sounds ambitious :)

You've inspired me to take a stab at it though. I just created a group called weboptimization – can we start a revolution?

http://groups.google.com/group/weboptimization

Working for an ad supported website, your #3 was interesting. Yet, I have tried to set this very test up in Google Website Optimizer and it's pretty much impossible to do so, because the outcomes are numerous (clicking on any ads), and you can only have one conversion point.

How did you do this with Google Website Optimizer? Or did you use another tool?

This was a great post Avinash, and it is why I stress to all of my clients that you have to look at the complete picture. Experiment with SEM to find keywords that convert and then optimize for SEO. Use web analytics and test to improve. For most, I've found that just one or two tests more than pay for the $70 – 120k that most MVT vendors charge. For SMBs, Google Website Optimizer is fine, as you described. Think about online marketing as a complete circle, and not just a component: How do I get visitors to my website, what are they doing on my website, and how can I improve upon what they're doing on my website?

The reason I myself don't do much A/B testing is that I have spent so much time and effort creating our website, and it has a lot of text content. To change it would really mean another redesign (although that is something I think is required every so often anyway). I would want it to be quite drastic: far less text, more streamlined if you like.

The big problem for me in this respect is related to the actual SERPs themselves. It's almost like, if you are ranking well, you feel things are very sensitive and you are reluctant to change anything that may damage that natural search traffic.

I don't want to believe that links etc. alone have the strength to keep you at good rankings, as opposed to the effort of creating text content, so, as I say, I am very reluctant to change things in a major way even though it could be so desirable.

You may do the testing that has immediate obvious conversion improvements, percentage wise, so you change the design, only to lose your most prominent pages from the top of the SERPs a month later.

Don't you see this as a problem for a services or information type site?

I think that usability testing ties into this great discussion because if the purpose of testing is to improve the effectiveness of the user interface and yield higher conversions, we have an opportunity to tie the testing Avinash writes about to usability testing. While there are a number of obstacles that relate to how organizations and agencies engage and plan UI projects, these can be removed over time by adopting best practices that pair front-loaded usability testing with back-loaded conversion testing.

It is now common to include usability testing in most UI re/design projects, an effort that only a few years back was mostly reserved for those with in-house staff or those with the resources to pay for what used to be a very expensive qualitative exercise. In 2006 for example, one of my clients paid over $15K for 2 days in a usability lab we rented in Chicago. Was this a good investment? I am not sure. The overall concept we were taking with the design was validated by the small sample of participants. That infused confidence in the multi-million dollar rebranding effort. But there was no way to quantitatively test the effectiveness of most screen flows before the staging phase.

Fast-forward a couple of years: usability professionals have tools that enable much cheaper usability testing and access to a larger, geographically diverse sample of users for their feedback. However, usability testing, while cementing confidence in the proposed prototype, is still mostly a qualitative affair, and often the only test focused on the effectiveness of the UI per the desired conversion path. This testing triggers the ‘green light’ for client acceptance and coding.

A testing gap is often opened when UI consultants are used. A common scenario: After UI signoff, the interaction team is scaled back, finishing up UI specifications for the development team because the ‘winning’ layouts, screen-flows and designs were established in the qualitative usability tests. The visual design team focuses mostly on finalizing style guides and Photoshop files of assets that were blessed (signed-off) by top management. The in-house development team is eager to take over from the consultant team and start coding. Actual development may take several months, often outsourced and without any further UI guidance on board. It sounds crazy, after all the money spent on the UI design, but it is not uncommon nevertheless.

By the time the site is launched the user interface has been tweaked and weakened. Disappointing conversions can sometimes be attributed to the skill gap that opened as described above. The UI is tested quantitatively, but the testing is done by web analytics professionals. I think it makes sense for web analytics and usability professionals to team up and build best practices around qualitative and quantitative testing of the user interface throughout its life cycle. A unified usability/testing model has a chance of becoming mainstream.

Another argument for the C-level types is that testing is a great way to put an end to arguments over opinion that take up hours of meetings and are ultimately irreconcilable. Problem is, I think this is also part of the resistance: people cling to their opinions and don't want to be proven wrong. Sadly, some people would rather see their site fail than be wrong. Personally, I'm wrong on a daily basis, and I revel in it :)

Ezra makes a great point – on the usability side we have a few definitions of "testing", and they've gotten a bit muddled. I think one-on-one user testing is now accessible enough that it's a great tool for getting qualitative information. Walking a few real people through your site can be fantastic for getting those eye-opening "A-ha!" moments where you see that site from an outside perspective. Those a-ha moments then become great fodder for split and multivariate testing – in my experience, one process drives the other.

As for it being cost and time-effective, I'm sorry, but those are excuses in 2008. I use testing extensively for even relatively small clients on fixed budgets with no in-house staff. Testing drives real results and ROI improvements, and if you're spending money to drive traffic but aren't spending time and money to convert that traffic, you're missing out.

Avinash,

I asked that question about dynamic parameters in the URL and got the answer:

——

http://groups.google.com/group/gwotechnical/t/f42b2e2a18de8bfc

GWO will "merge" any URL parameters present in your "A" URL and pass them along to your "B" URL also.

——
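To picture what that "merge" means in practice, here is a minimal sketch using Python's standard library. It only illustrates the idea and is not how GWO is implemented; the example URLs and the rule that the "B" URL's own parameters win on a clash are assumptions.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

def merge_params(a_url, b_url):
    """Return b_url with a_url's query parameters carried over.

    Parameters already present on b_url win; that precedence is an assumption.
    """
    a_parts, b_parts = urlsplit(a_url), urlsplit(b_url)
    merged = dict(parse_qsl(a_parts.query))
    merged.update(parse_qsl(b_parts.query))
    return urlunsplit(b_parts._replace(query=urlencode(merged)))

# Hypothetical "A" and "B" page URLs.
print(merge_params("http://example.com/a.html?src=ppc&id=42",
                   "http://example.com/b.html"))
```

The visitor who arrived on the "A" URL with campaign or product parameters ends up on the "B" page with the same parameters, so a dynamic page can still render the right content.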

I also learned that:

===

Your "original" page can be (and usually is) used as your "A" page.

===

Thanks!

I finally reached the conclusion that targeting must come before testing. To tell someone to test a page means you are telling them that their instincts are wrong. Their instincts probably are wrong, but nobody wants to hear that.

When you bring up targeting, you can suggest that the page is a compromise, and that another version of offer, content, etc. may be "more right" for a subset of their traffic – existing customers, PPC traffic, geo.

Then you can ask "what might we change if we can imagine ourselves as that *type* of visitor?" Those ideas become concepts for targeted delivery, and it introduces the concept of tuning the experience, which inevitably leads to testing.

Plus it has the side benefit of being valuable.

It has been a while Avinash, and it is good to see you are still carrying the flag!

And, with a nod to the Eisenbrothers and Mendez, testing should start with evaluating all elements of a page for relevance to the individual that is visiting. If the article, product, or service is the core of the page, then everything surrounding the page should be evaluated for relevance to that core asset or the goal of the visitor.

Avinash, what a great post! I specifically like the second half where you discuss the "off the beaten path" ideas. These aren't always the most intuitive ways of thinking about user experience problems/solutions but once you hear them it's like a light bulb went off (the coupon "trick", online product testing examples were nice).

On the contrary, I think your promise of a 6.5 minute setup time for website optimizer is bogus. You should put in a disclaimer that both A/B pages should be built out and published prior to the test (and for MVT all imagery should be uploaded as well). This gives no real time for "proper" hypothesis review or asset creation.

Those pieces of website testing are often the most difficult to navigate in larger organizations where different teams own the different parts necessary to orchestrate a successful test (site branding vs. technology deployment vs. etc).

[…] If you only have time to read one article please read this one! Without experimentation you get stagnation, and no innovation. And there is no better place to test ideas than online. Experiment or Die. Five Reasons And Awesome Testing Ideas. […]

Avinash –

Great write up. If you remember that video session we did in SF for the JMP conference… The TQM Network has been promoting "experiments" (DOE) for quite a while. Very nice job at explaining benefits and simple entry points for the on-line world. Thanks.

eric

Great comments as always. It takes the right person internally to champion testing. I pushed our former owner for 4 months before we finally started testing landing pages – the results spoke for themselves and now we test/measure everything. Great information as always!

not for posting obviously – just fyi: "Q&A" means "questions and answers," not the same thing as "QA," which means "Quality Assurance" (which is what you meant, I believe)

+ + + + + +

You are right! Thanks. Fixed in the post. -Avinash.

Well written.

I've been all about promoting "experiments" for a long time now. Good job pointing out the advantages and good places to start for the big old world wide web.

Thanks.

We are ready to test… Only concern is for the guest Web site experience. I have not read anything on what the visitor to the Web site thinks when they click on the test page only to return later in the session and discover a different page. Does A/B testing address this issue? Is the test page static once the session begins and the user returns?

Plow Boy: You have identified one key element in developing the test strategy, and your point has implications well beyond the web visitor's experience.

The best testing tools use cookies to ensure two things:

1. that the web visitor's experience remains constant, and

2. that no matter how many times the web visitor returns to the tested page, we can track the eventual conversion and credit the one variation s/he saw.
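Those two points can be sketched in a few lines. This is a simplified illustration and not any vendor's actual code: the cookie name is hypothetical, and a plain dictionary stands in for the browser's cookie jar.

```python
import random

VARIATIONS = ["A", "B"]
COOKIE_NAME = "test_variation"  # hypothetical cookie name

def assign_variation(cookies, rng=random):
    """Return the visitor's variation, choosing one only on the first visit."""
    if cookies.get(COOKIE_NAME) not in VARIATIONS:
        cookies[COOKIE_NAME] = rng.choice(VARIATIONS)
    return cookies[COOKIE_NAME]

def record_conversion(cookies, tally):
    """Credit a conversion to the variation stored in the visitor's cookie."""
    variation = cookies.get(COOKIE_NAME)
    if variation in VARIATIONS:
        tally[variation] = tally.get(variation, 0) + 1

visitor_cookies = {}   # stands in for the browser's cookie jar
tally = {}
first = assign_variation(visitor_cookies)
later = assign_variation(visitor_cookies)   # return visit: same page as before
record_conversion(visitor_cookies, tally)
print(first, later, tally)
```

Because the choice is stored on the first visit and only read afterwards, the returning visitor sees the same page, and the eventual conversion is credited to that one variation.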

Yeah!

Except for one thing, we always tell our readers NOT to test the 'highest bounce rate' entry page first (unless you're spending a bucketload sending traffic to that page, in which case, test it fast.) Instead, go as far down the conversion funnel as you can and still get conclusive results (enough conversions per 20-30 day period that the stats are worth looking at) which is usually a cart, product page, or lead generation form page.

Pick the page that's already doing not so badly. Make sure it's one where office politics won't be a problem if you have test results that indicate a change is needed. Test that page. Then test your way back up to the start of the conversion path… ultimately up to the landing page.

Why? It's all about the bottom line. If you can get, say, a 20% bump for a key conversion page that's already getting solid conversions, that's a nice fat bump to the bottom line. Great testing ROI. Whereas a 100% bump for a page with incredibly bad conversion rates still leaves you with … slightly better but still incredibly bad conversion rates. In marketing, it's all about ROI.
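A quick sketch of that comparison, with invented monthly conversion counts (only the 20% and 100% bumps come from the argument above):

```python
def extra_per_month(current_conversions, relative_bump):
    """Additional conversions per month from a relative improvement."""
    return current_conversions * relative_bump

# Assumed counts: a healthy cart page vs. a badly converting entry page.
solid_page = extra_per_month(current_conversions=1_000, relative_bump=0.20)
weak_page = extra_per_month(current_conversions=40, relative_bump=1.00)
print(f"solid page: +{solid_page:.0f}/month, weak page: +{weak_page:.0f}/month")
```

The smaller percentage on the page that already converts adds far more to the bottom line than doubling a page that barely converts.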

Later, when you've proven testing's worth to management, maybe then you can fiddle around with those high bounce pages and have no one complaining that you're wasting valuable time.